How Tweet Hunter scaled to an 8-figure exit with AI21’s LLM

The Brand

Tweet Hunter is an all-in-one Twitter growth tool, designed to help users grow and monetize their Twitter audience. Their goal is to make it as easy as possible for users to create high-performing content, build an audience around their topics of expertise, and capitalize on monetization opportunities.

The Story

The founders of Tweet Hunter started with an insane challenge: ship one new product every week till you find the one that fits.

“Generating revenue from the product was our number one, clear signal for validation,” says Thibault Louis-Lucas, founder of Tweet Hunter.

The team started with Twitter as a distribution channel for their products because they had an engaged following of 2,000 people. So they started building a product to help people with a Twitter following generate sales.

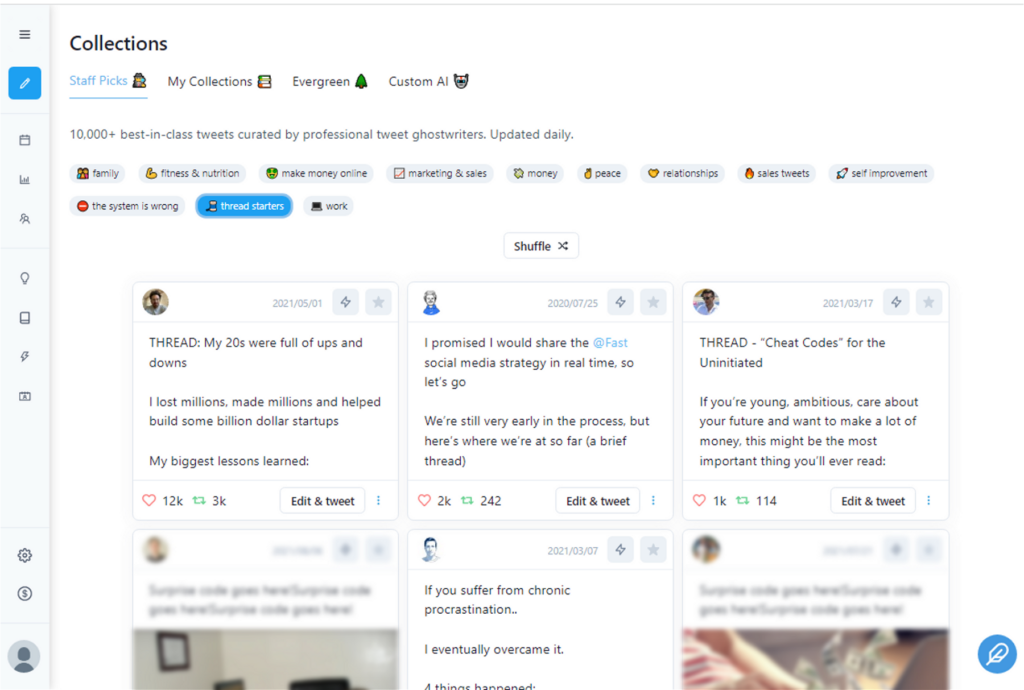

Their guiding principle: consistently great content is crucial to a creator’s growth on Twitter.

But they needed an LLM partner who could see their vision and fine-tune a model for their specific use cases.

Why AI21? Shared core values, flexibility, and excellence

Tweet Hunter was looking for ways to empower creators to write incredible content faster by removing grunt work from the equation. They wanted to create tools that help creators focus on the creative aspects rather than mundane tasks such as editing, collection, and formatting. Tweet Hunter’s product needs were perfectly aligned with AI21’s mission: to help developers build AI-first writing experiences.

After switching from the legacy GPT-3, Tweet Hunter found that only AI21 shared this vision.

“We could’ve switched back to GPT-3, but we stayed because of the flexibility that AI21 was providing with the fine-tuning of models,” says Thibault.

One of the bigger differentiators for Tweet Hunter was AI21’s willingness to create a customized pricing plan in sync with Tweet Hunter’s usage and growth. Tweet Hunter saved significantly on customization costs because AI21’s pricing for custom fine-tuned model usage is the same as for foundation model usage. Other LLM providers (such as OpenAI) offer customization at approximately six times the cost of their foundation models.
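To make the pricing comparison concrete, here is a rough back-of-the-envelope sketch. The base price and monthly token volume are hypothetical placeholders, not actual AI21 or OpenAI rates; only the 1x and roughly 6x multipliers come from the comparison above.

```python
# Hypothetical figures for illustration only; not real vendor pricing.
BASE_PRICE_PER_1K_TOKENS = 0.02   # assumed foundation-model price (USD)
MONTHLY_TOKENS = 50_000_000       # assumed monthly usage


def monthly_cost(price_per_1k: float, tokens: int, custom_multiplier: float) -> float:
    """Cost of serving `tokens` through a custom model priced at
    `custom_multiplier` times the foundation-model rate."""
    return tokens / 1000 * price_per_1k * custom_multiplier


same_price = monthly_cost(BASE_PRICE_PER_1K_TOKENS, MONTHLY_TOKENS, 1.0)
six_times = monthly_cost(BASE_PRICE_PER_1K_TOKENS, MONTHLY_TOKENS, 6.0)

print(f"custom model at foundation price: ${same_price:,.2f}")
print(f"custom model at ~6x price:        ${six_times:,.2f}")
print(f"difference per month:             ${six_times - same_price:,.2f}")
```

Whatever the real base rate is, the gap scales linearly with usage, which is why the multiplier matters more as a product grows.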

With these criteria coming together perfectly, Tweet Hunter set out to optimize the product on its way to an 8-figure exit.

The first challenge: Generative AI for social media

Social media channels have strict specifications, such as character limits, hashtag conventions, and even tone of voice. This makes effective generative AI for social media a challenging task that requires intense experimentation and refinement.
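As an illustration of what such constraints look like in practice, the sketch below filters generated candidates against a length limit and a hashtag cap. It is not Tweet Hunter’s implementation: the function names and the hashtag cap are hypothetical, and the length check is a simplification of Twitter’s weighted character counting.

```python
import re

MAX_TWEET_CHARS = 280   # Twitter's standard limit for a single tweet
MAX_HASHTAGS = 2        # hypothetical house rule, not a platform limit

HASHTAG_RE = re.compile(r"#\w+")


def check_tweet(text: str) -> list[str]:
    """Return a list of problems found in a generated tweet (empty if none)."""
    problems = []
    # Simplification: len() counts code points, whereas Twitter applies
    # weighted counting to URLs and some characters.
    if len(text) > MAX_TWEET_CHARS:
        problems.append(f"too long: {len(text)} > {MAX_TWEET_CHARS} characters")
    hashtags = HASHTAG_RE.findall(text)
    if len(hashtags) > MAX_HASHTAGS:
        problems.append(f"too many hashtags: {len(hashtags)} > {MAX_HASHTAGS}")
    if not text.strip():
        problems.append("empty tweet")
    return problems


def keep_valid(candidates: list[str]) -> list[str]:
    """Keep only the generated candidates that pass every check."""
    return [c for c in candidates if not check_tweet(c)]
```

A post-generation filter like this catches hard constraints cheaply; softer qualities such as tone of voice are the part that fine-tuning is meant to address.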

Initially, this was a challenge for Tweet Hunter since their primary product offering was designed for Twitter.

AI21 offered 3-click custom model training, which Tweet Hunter leveraged with their proprietary data to fine-tune a model for the exact capabilities they envisioned. AI21 was flexible in both approach and features, so Tweet Hunter could build a tool with multiple points where AI can assist.

The second challenge: too many distinct use cases

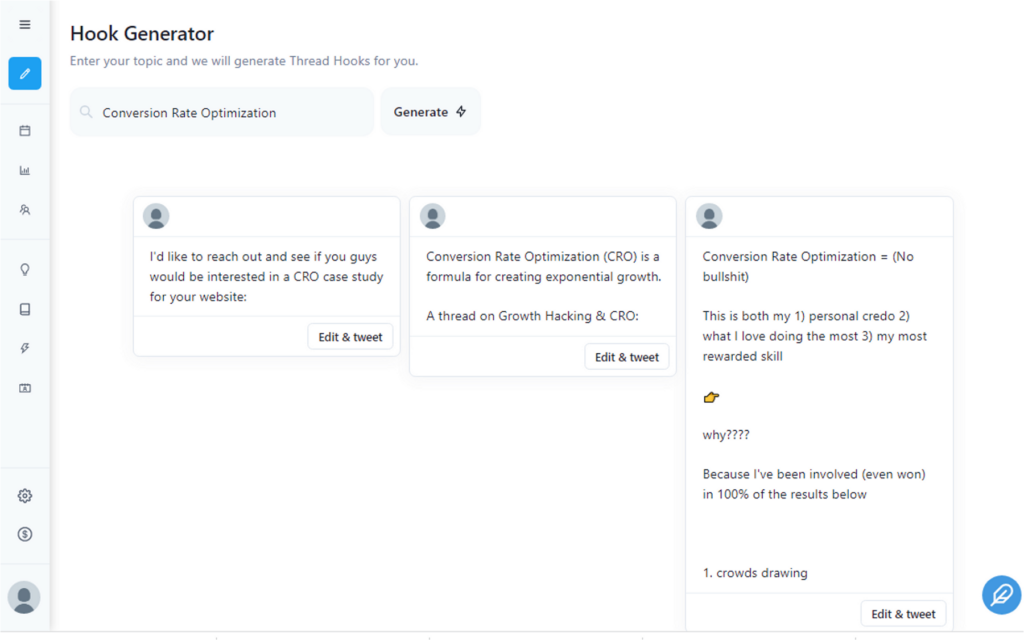

Writing engaging tweets presents a multi-level challenge: start with a creative hook, keep it succinct, and build an engaging experience for threads.

This means your AI text generator must be adaptable to different use cases. Each of these use cases also needs to integrate seamlessly while being mindful of the user experience.

“Twitter’s own user interface and algorithm is optimized for engagement and consumption, it’s not optimized to inspire you. And that’s a problem,” says Thibault.

AI21 anticipated diverse use cases and built their large language model to be flexible and adaptable from the outset. As a result, Tweet Hunter could fine-tune the model in three clicks, iterate quickly, and reach success.

The resulting model was built with three distinct capabilities and two sub-capabilities:

- Thread idea generator

- Hook generator

- Tweet writer

Sub-capabilities

- Tweet extender (expands on the tweet you’ve already started writing)

Each of these features is presented in a fluid transition on the Tweet Hunter platform, which led to over 5,000 paying customers for the tool.

The third challenge: unreliable data to train large language models

Tweet Hunter’s founders had tried every method under the sun to consolidate relevant data, from using freelancers to creating spreadsheets themselves.

Not only was this exercise time-intensive, but it also produced inconsistent and unreliable results.

They were so disappointed after trying out multiple generative AI tools that they switched back to manual data collection. They were worried that poor-quality examples would undermine the very premise of Tweet Hunter: inspiration for great content.

Enter AI21.

AI21 Studio’s built-in functionality allowed Tweet Hunter to fine-tune the model to learn from a diverse tweet database. AI21’s generated suggestions improved markedly as the system evolved, and Tweet Hunter’s goal of helping creators write save-worthy tweets was achieved in a fraction of the time expected.
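For readers curious what preparing such a tweet database might involve, here is a minimal sketch that cleans raw records and writes them as prompt/completion pairs. Everything in it is an assumption for illustration: the record schema, the `min_likes` threshold, the prompt wording, and the `prompt`/`completion` field names should all be checked against the fine-tuning provider’s current dataset requirements.

```python
import json


def build_training_rows(tweets: list[dict], min_likes: int = 50) -> list[dict]:
    """Turn raw tweet records into prompt/completion pairs.

    Each record is assumed to look like
    {"topic": str, "text": str, "likes": int}; this schema is hypothetical.
    """
    seen = set()
    rows = []
    for tweet in tweets:
        text = " ".join(tweet["text"].split())  # collapse stray whitespace
        key = text.lower()
        # Skip empty, low-engagement, and duplicate examples.
        if not text or tweet["likes"] < min_likes or key in seen:
            continue
        seen.add(key)
        rows.append({
            "prompt": f"Write a tweet about {tweet['topic']}:\n",
            "completion": " " + text,
        })
    return rows


def to_jsonl(rows: list[dict]) -> str:
    """Serialize rows as JSON Lines, one training example per line."""
    return "\n".join(json.dumps(row, ensure_ascii=False) for row in rows)


sample = [
    {"topic": "pricing", "text": "Charge more.  Then deliver more.", "likes": 120},
    {"topic": "pricing", "text": "charge more. then deliver more.", "likes": 300},
    {"topic": "hiring", "text": "Hire slow.", "likes": 3},
]
rows = build_training_rows(sample)
print(to_jsonl(rows))
```

Filtering and deduplicating before training targets the inconsistency problem described above: the model only learns from examples that have passed the same explicit checks.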

AI21’s custom model and proactive engagement throughout the journey were exactly what Tweet Hunter needed in a partner.

“We deeply appreciate AI21’s level of involvement with our product and needs in the early days. We were able to evaluate the model’s quality before production and maintain high standards on the quality of tweets,” says Thibault.

What changed for Tweet Hunter after working with AI21?

In the last two years, Thibault’s personal account grew from 2,000 followers to 60,000 followers. With Tweet Hunter’s scheduling feature and tweet generation tools powered by AI21’s language models, he (and other users) could tweet frequently and effectively.

Tweet Hunter scaled to 1M ARR and an 8-figure exit in under a year.

“The content is very high quality. The tool works with diverse creators, varied niches, and complex topics. So most of the growth comes from the fact that our users are actually successful with the tool,” says Thibault.

The level of personalization possible with AI21 made Tweet Hunter the first choice for many creators.

“Personalized, highly viral tweet formats developed with AI21 helped busy users share valuable content. That was the win. Our tool became 10 times faster with the AI integration,” says Thibault.

The road forward

With lempire’s acquisition of Tweet Hunter, the road forward is fast-paced and fascinating.

The team plans to introduce new features to the platform based on public feedback. AI21 is also set to be integrated with other products in lempire’s toolkit: personalized cold emails, automated follow-ups, and engagement with leads across multiple channels.