Announcing Jurassic-2 and Task-Specific APIs

Our focus at AI21 Studio is to help developers and businesses leverage reading and writing AI to build real-world products with tangible value. Today marks two important milestones with the release of Jurassic-2 and Task-Specific APIs, empowering you to bring generative AI to production.

Jurassic-2 (or J2, as we like to call it) is the next generation of our foundation models with significant improvements in quality and new capabilities including zero-shot instruction-following, reduced latency, and multi-language support.

Task-Specific APIs give developers industry-leading tools that perform specialized reading and writing tasks out of the box.

Read on for an in-depth look at each.

Jurassic-2

We’re proud to present our brand new family of state-of-the-art Large Language Models. J2 not only improves upon Jurassic-1 (our previous generation models) in every aspect, but it also offers new features and capabilities that put it in a league of its own.

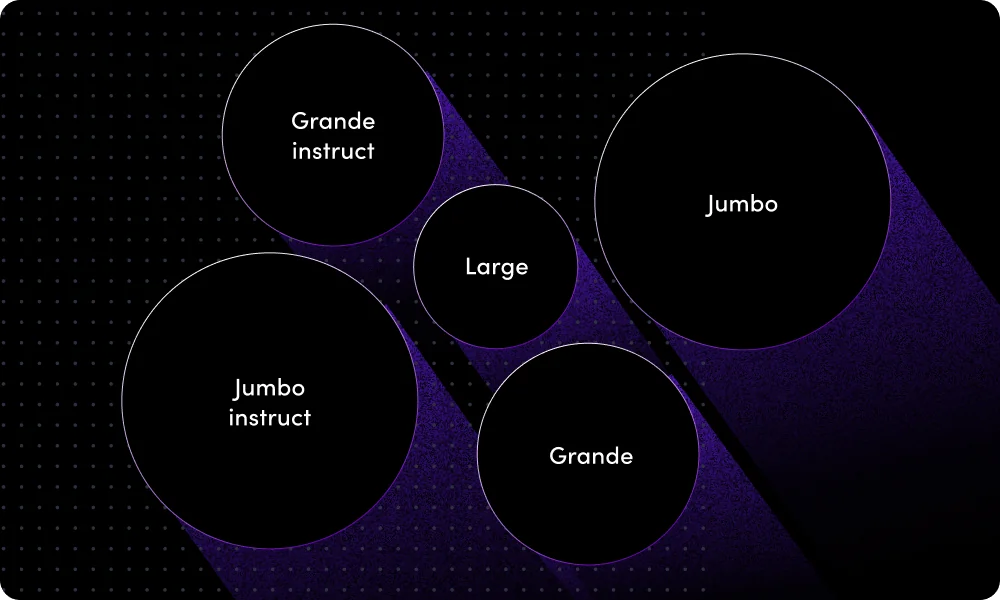

The Jurassic-2 family includes base language models in three different sizes: Large, Grande and Jumbo, alongside instruction-tuned language models for Jumbo and Grande.

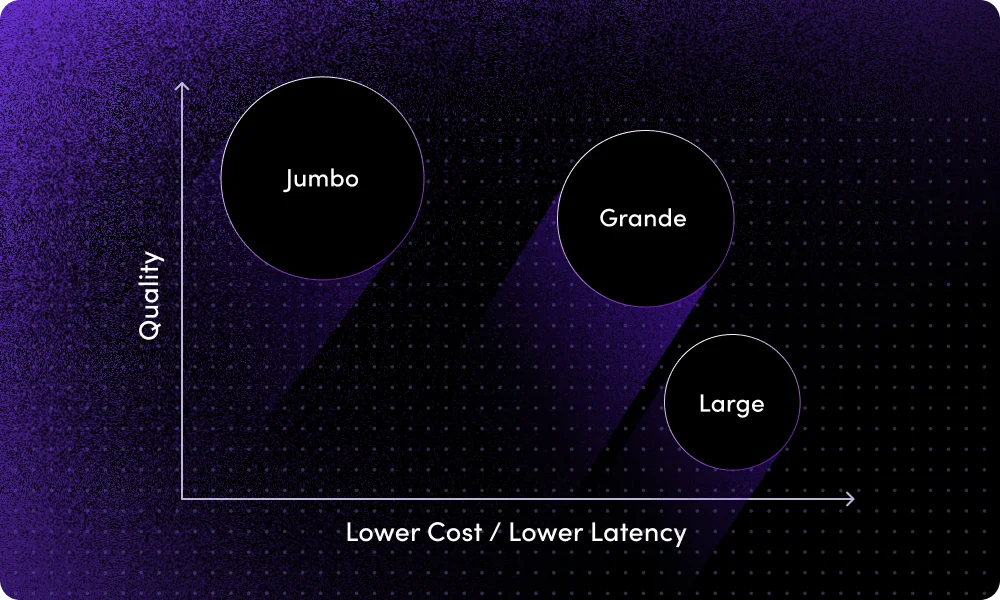

Jurassic-2 is already making waves on Stanford’s Holistic Evaluation of Language Models (HELM), the leading benchmark for language models. Currently, J2 Jumbo ranks second (and climbing) according to an evaluation we conducted using HELM’s official repository. No less important, our mid-sized model (Grande) ranks significantly higher than models up to 30x larger, enabling users to optimize production costs and speed without sacrificing quality.

What’s new compared to Jurassic-1?

Improved quality

Thanks to cutting-edge pre-training methods combined with the latest data (current up to mid-2022), J2’s Jumbo model scored an 86.8% win rate on HELM in our internal evaluations, solidifying it as a top-tier option in the LLM space.

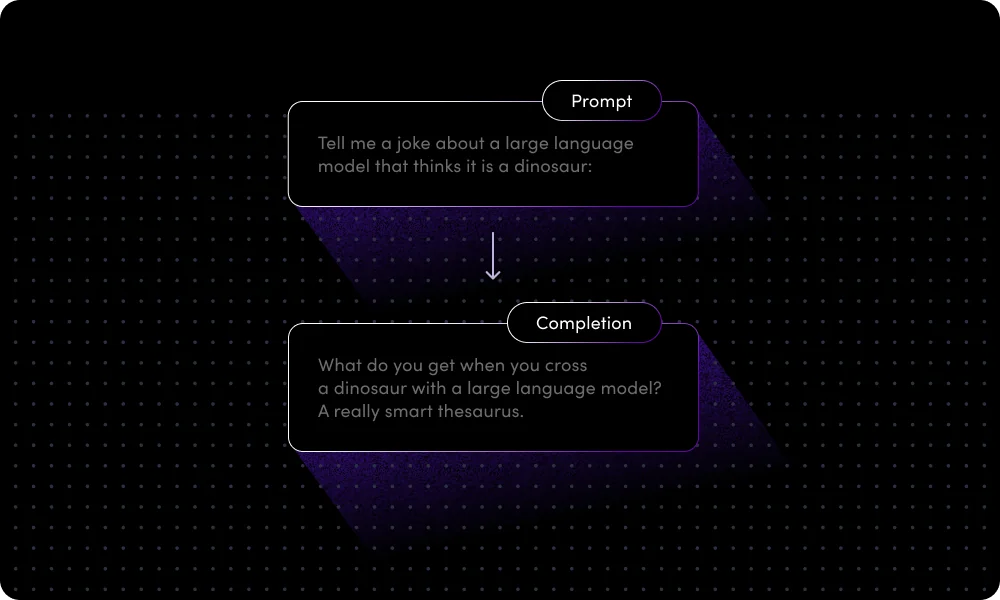

Instruct capabilities

J2’s best-in-class models offer zero-shot instruction capabilities, allowing them to be steered with natural language without the use of examples. J2’s Jumbo and Grande models have been adapted to include these capabilities. Here’s an example:

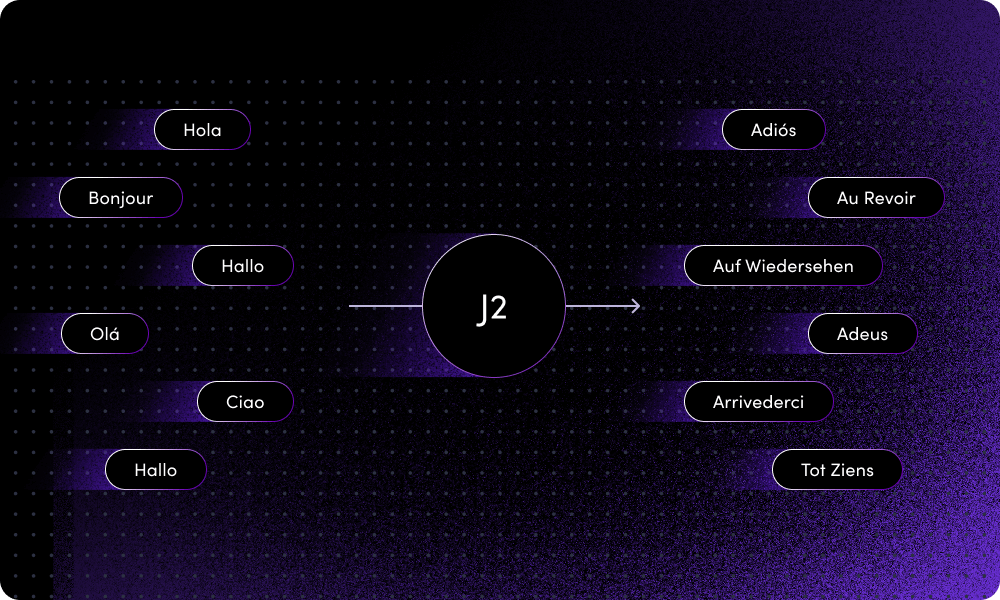
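To make the contrast with example-based prompting concrete, here is a minimal sketch in Python. It only builds prompt strings and does not call any API; the function names, the "Review/Sentiment" layout, and the sample texts are all illustrative assumptions, not part of AI21 Studio.

```python
def few_shot_prompt(examples, query):
    """Prompt for a base model: the task is implied by worked examples."""
    blocks = [f"Review: {text}\nSentiment: {label}" for text, label in examples]
    # The final block is left open for the model to complete.
    blocks.append(f"Review: {query}\nSentiment:")
    return "\n\n".join(blocks)


def zero_shot_prompt(instruction, query):
    """Prompt for an instruction-tuned model: the task is stated in plain language."""
    return f"{instruction}\n\nReview: {query}\nSentiment:"


# Made-up sample data, for illustration only.
examples = [
    ("The battery lasts all day.", "positive"),
    ("It broke after a week.", "negative"),
]
query = "Setup was quick and painless."

print(few_shot_prompt(examples, query))
print("---")
print(zero_shot_prompt(
    "Classify the sentiment of the review as positive or negative.", query
))
```

The zero-shot version carries no labeled examples at all; the instruction alone describes the task, which is what the Instruct models are adapted to follow.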

Multilingual support

J2 supports several non-English languages, including Spanish, French, German, Portuguese, Italian and Dutch.

Performance

In terms of latency, J2’s models can perform up to 30% faster than our previous models.

Take it for a spin

Jurassic-2 will be available for free until May 1st, 2023. In addition, Jurassic-2 and Jurassic-1 models are now offered under our new reduced and simplified pricing model, based on the total length of text (input + output).
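As a rough sketch of what length-based pricing means in practice, the snippet below adds input and output length and multiplies by a rate. The unit of length and the rate are not stated here, so both are assumptions: `price_per_1k_units` is a hypothetical placeholder, not an actual AI21 price.

```python
def estimate_cost(input_length, output_length, price_per_1k_units):
    """Estimate the cost of one request from the total text length.

    Lengths are in whatever unit the pricing uses (an assumption here);
    the charge covers input and output together.
    """
    total_length = input_length + output_length
    return total_length / 1000 * price_per_1k_units


# Hypothetical rate, chosen only to make the arithmetic easy to follow.
HYPOTHETICAL_PRICE_PER_1K = 0.01

cost = estimate_cost(input_length=1500, output_length=500,
                     price_per_1k_units=HYPOTHETICAL_PRICE_PER_1K)
print(f"Estimated cost: {cost:.4f}")
```

Because the prompt and the completion are billed together, shortening a long prompt reduces cost just as much as shortening the output does.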

All Jurassic-2 models are now available for you on our playground and API. To help you get started, we’ve collected some tips and tricks for working with the new Instruct models here.

Task-Specific APIs

Today, AI21 Labs is also proud to announce our new line of Task-Specific APIs, with the launch of the Wordtune API set, giving developers access to the language models behind our massively popular consumer-facing reading and writing apps.

Why do we need Task-Specific APIs?

General Large Language Models are incredibly powerful, and many of our customers have successfully customized them to power their applications. However, we’ve also seen that certain use-cases recur frequently among many users.

Task-specific APIs let developers skip much of the model training and fine-tuning that would otherwise be needed, so they can take full advantage of our ready-made, best-in-class language processing solutions.

Wordtune and Wordtune Read both use cutting-edge AI to assist users with writing and reading tasks – all while saving time and improving performance. With the release of Wordtune API, we’re giving developers access to the AI engine behind this award-winning line of applications, allowing them to take full advantage of Wordtune’s capabilities and integrate them into their own apps:

- Paraphrase – Reword texts to fit any tone, length, or meaning.

- Summarize – Condense lengthy texts into easy-to-read bite-sized summaries.

- Grammatical Error Correction (GEC) – Catch and fix grammatical errors and typos on the fly.

- Text Improvements – Get recommendations to increase text fluency, enhance vocabulary, and improve clarity.

- Text Segmentation – Break down long pieces of text into paragraphs segmented by distinct topic.

Outperforming the Competition

When it comes to paraphrasing and summarizing capabilities, Wordtune API is truly a best-in-class performer.

Summarize API

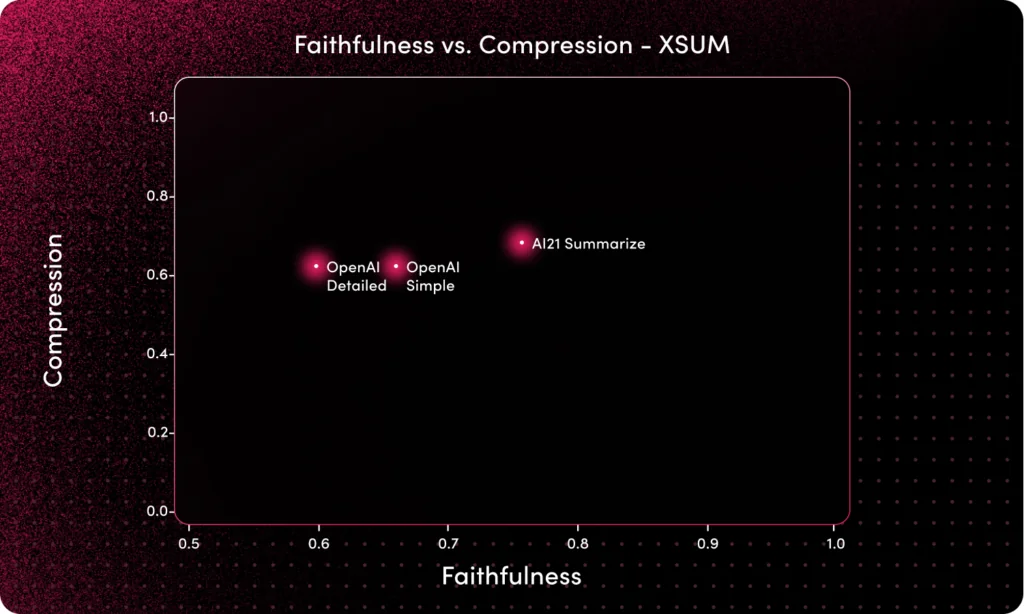

Faithfulness rates measure how factually consistent a summary is with the original text. As you can see below, our new Summarize API has reached a faithfulness rate that outperforms OpenAI’s Davinci-003 by 19%.

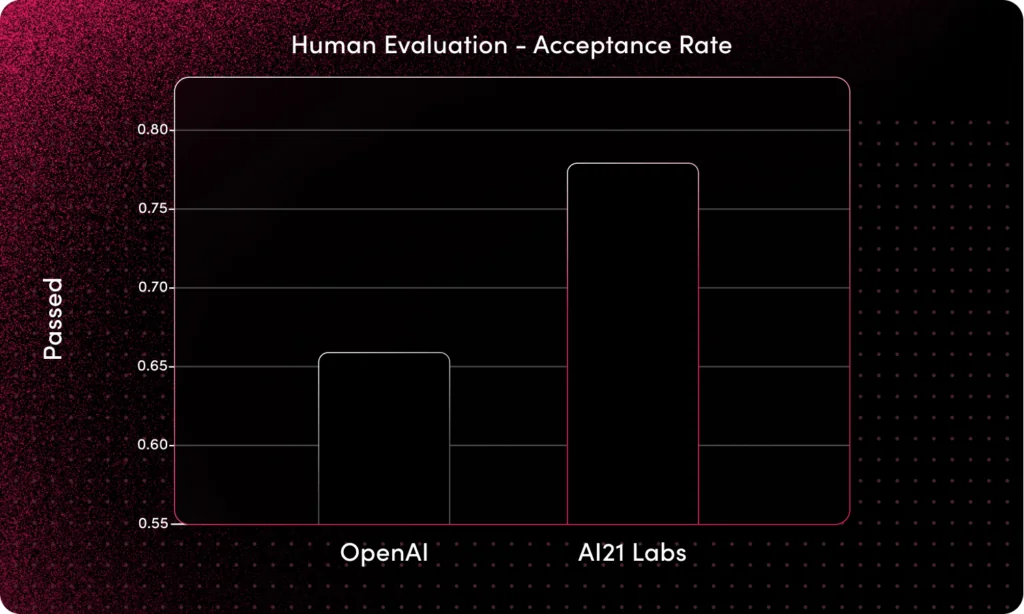

Acceptance rates measure how satisfied human evaluators are with the quality of generated summaries, and we’re proud to say that our Summarize API has achieved an acceptance rate that is 18% higher than OpenAI’s.
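For readers who want to run a similar comparison on their own data, here is a minimal sketch of how such rates could be computed, assuming each summary receives a single yes/no judgment (faithful or not, accepted or not). The exact evaluation protocol behind the figures above is not described in this post, and the judgments below are made up for illustration.

```python
def rate(judgments):
    """Fraction of summaries that received a positive judgment."""
    if not judgments:
        raise ValueError("need at least one judgment")
    return sum(judgments) / len(judgments)


def compare(rate_a, rate_b):
    """Express the gap between two rates in two common ways."""
    return {
        # Absolute gap, e.g. 75% vs 50% is 25 percentage points.
        "percentage_points": (rate_a - rate_b) * 100,
        # Gap relative to the baseline, e.g. 75% vs 50% is 50% higher.
        "relative_percent": (rate_a - rate_b) / rate_b * 100,
    }


# Made-up judgments: True means the evaluator marked the summary as faithful.
system_a = [True, True, False, True]
system_b = [True, False, False, True]

print(rate(system_a), rate(system_b))
print(compare(rate(system_a), rate(system_b)))
```

Reporting both the absolute and the relative gap avoids ambiguity when a difference is quoted as a single percentage.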

Paraphrase API

Our Paraphrase API’s latency is approximately one-third of OpenAI’s.

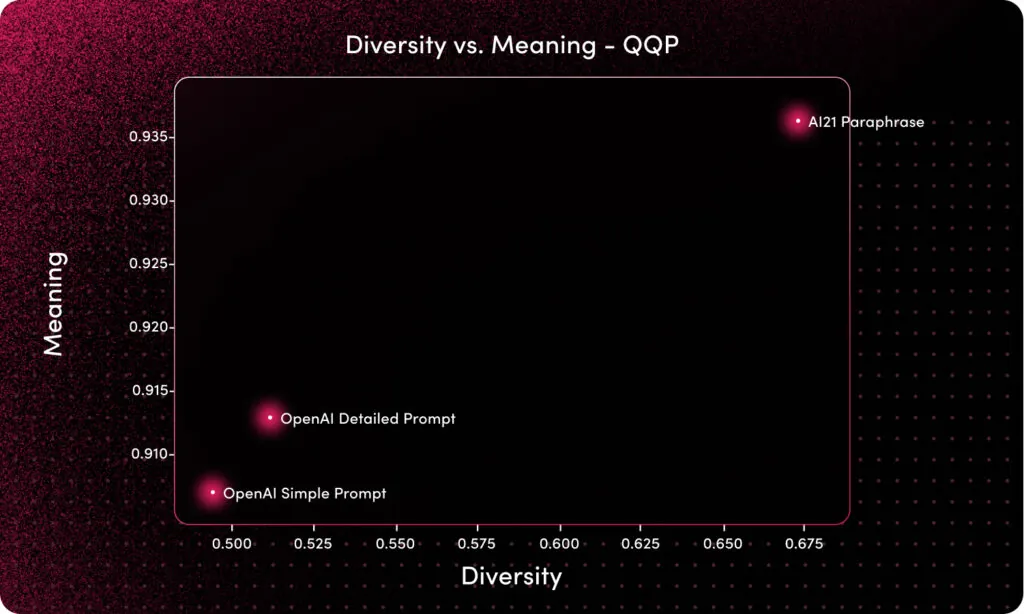

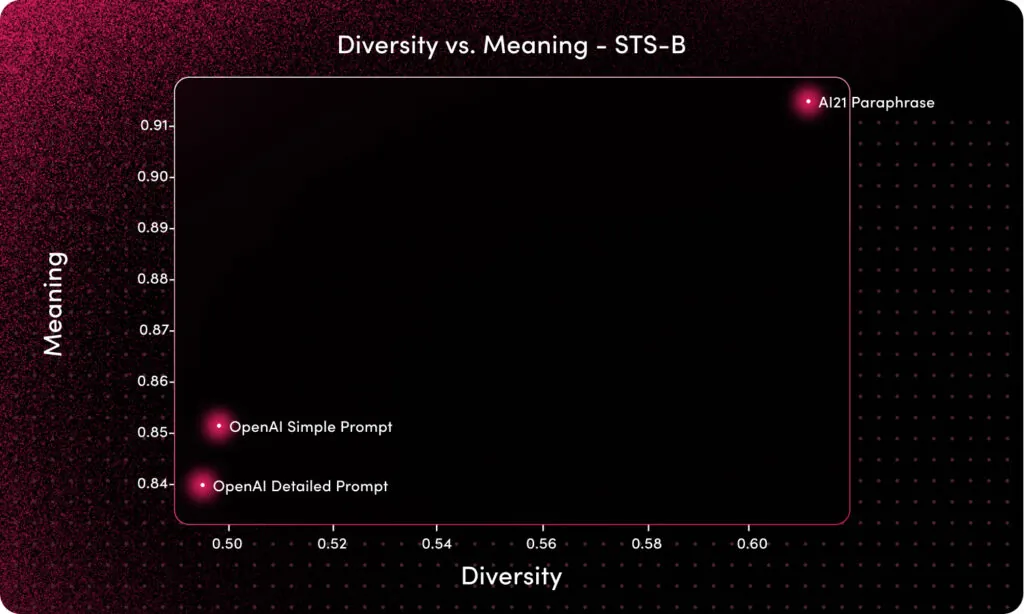

Our Paraphrase API outperforms OpenAI in both diversity of results (by 33%) and meaning preservation (by 8%).

QQP benchmark:

STS-B benchmark:

The new releases of the Jurassic models and Task-Specific APIs both demonstrate our commitment to providing cutting-edge technology that enables our customers to build better language processing applications with ease, and deploy them into production in minutes.