
Introducing Contextual Answers: Unlocking Organizational Knowledge

Generative AI is engaging billions of people around the world with tools that inspire creativity – writing text, composing music, and creating digital art.

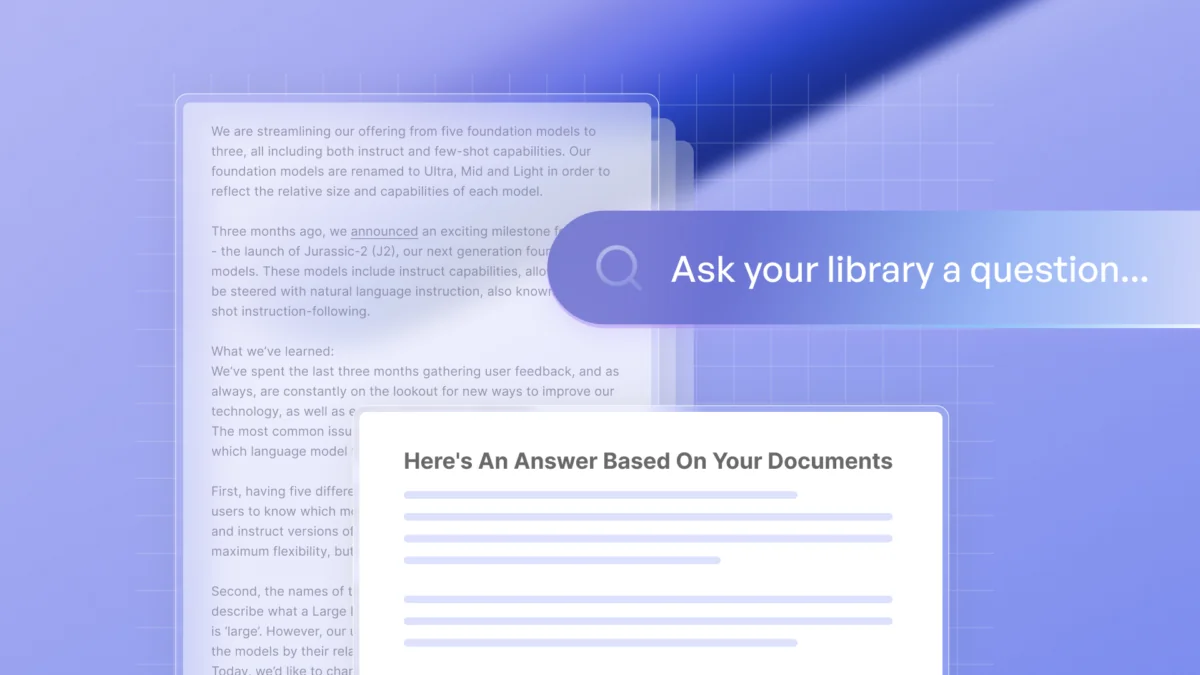

Today we’d like to introduce the evolution of GenAI from creativity to business productivity. A major step in this direction is Contextual Answers, which lets businesses tap into their organizational data with the power of generative AI.

Starting today, Contextual Answers lets businesses upload entire libraries of documents – knowledge bases, help center libraries, business reports, policies, guidelines, manuals and playbooks – and then provides an answering engine entirely grounded in the corpus of information.

Get started with Contextual Answers

Why do businesses need it?

While generative AI shows incredible promise, most businesses struggle to adopt it, according to a recent KPMG survey. They cite cost, complexity, and the models’ lack of specialization in their organizational data, which leads to responses that are incorrect, ‘hallucinated’ or inappropriate for the context. This can create significant liability and, in many instances, make generative AI unusable for business use cases.

This is why we’ve developed Contextual Answers, an end-to-end API solution where answers are designed to be completely grounded in organizational knowledge and avoid hallucinations.

We’re seeing tremendous demand from businesses that wish to leverage Contextual Answers to supercharge organizational productivity and provide superior customer experiences.

Clarivate, a leading global information services provider, partnered with us to apply Contextual Answers across their suite of library solutions, providing students, faculty and researchers answers to questions grounded in curated and trusted scholarly content.

Here are a few other examples.

- Customer Support for CRMs and Independent Software Vendors: answering end-user support questions using internal organizational documentation, reducing support load and cost while improving customer satisfaction

- Financial Service Institutions: assisting in the analysis of business documents such as financial reports, call logs, and past presentations, allowing analysts to cover more resources

- Education Service Providers: allowing students, teachers, and researchers to quickly find answers in large databases full of articles, books and research papers

- Legal Services and Insurance: providing the ability to quickly look up relevant legal documents, including legislation, regulations and internal compliance guidelines

- Sales and Marketing: supporting sales associates by pulling information from product documents, competitor analysis, marketing materials, and sales playbooks to answer customer queries or build pitches

- Field Technicians: enabling field technicians to quickly get answers to manual-specific questions without wasting time searching through countless technical documents

Walkthrough: Build a knowledge management system using Contextual Answers

As an example, let’s leverage these capabilities to create an efficient knowledge management system (KMS) for an organization. According to a recent report from Coveo, the average employee spends 3.6 hours daily searching for relevant information, including internal company policies (for example, hybrid work guidelines).

Now, with Contextual Answers, you can easily deploy a full question-answering system based solely on organizational data. By providing employees with rapid answers backed by attributed sources, businesses can dramatically increase productivity.

Ready to get started?

Step 1: Upload your files

You can upload your files to your Library, where we offer free storage. In this example, we will upload three documents with company policies (working from abroad, hybrid work guidelines, IT security).

This can be done with a simple call using our Python SDK (or an HTTP request):

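import ai21

ai21.api_key = "YOUR_API_KEY"  # replace with your AI21 Studio API key

file_path = "path/to/your/document.pdf"  # placeholder: local path of the file to upload

file_id = ai21.Library.Files.upload(file_path=file_path)

You can also upload files via our Studio platform.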

You can upload a file as it is, store it in a directory (for those who like working with directories) or add labels. This can help you organize your filing system, while focusing your questions on a subset of documents.
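To illustrate how directories and labels can narrow questions to a subset of documents, here is a small local sketch. It does not call the SDK: the `LibraryFile` class, the `select_files` helper, and the file names, directories, and labels are all hypothetical, chosen only to mirror the three policy documents in this walkthrough.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class LibraryFile:
    """Local stand-in for an uploaded file and its organizing metadata."""
    name: str
    directory: str = ""
    labels: list[str] = field(default_factory=list)


def select_files(
    files: list[LibraryFile],
    label: Optional[str] = None,
    directory: Optional[str] = None,
) -> list[str]:
    """Return the names of files matching the given label and/or directory."""
    selected = []
    for f in files:
        if label is not None and label not in f.labels:
            continue
        if directory is not None and f.directory != directory:
            continue
        selected.append(f.name)
    return selected


# Hypothetical metadata for the three policy documents in this walkthrough.
files = [
    LibraryFile("working_from_abroad.pdf", directory="policies/hr", labels=["hr", "remote"]),
    LibraryFile("hybrid_work_guidelines.pdf", directory="policies/hr", labels=["hr", "remote"]),
    LibraryFile("it_security.pdf", directory="policies/it", labels=["security"]),
]

print(select_files(files, label="remote"))
print(select_files(files, directory="policies/it"))
```

Tagging both remote-work policies with a shared label means a question about working from home only needs to consider those two files, while the IT security policy stays out of scope.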

Step 2: Ask a question

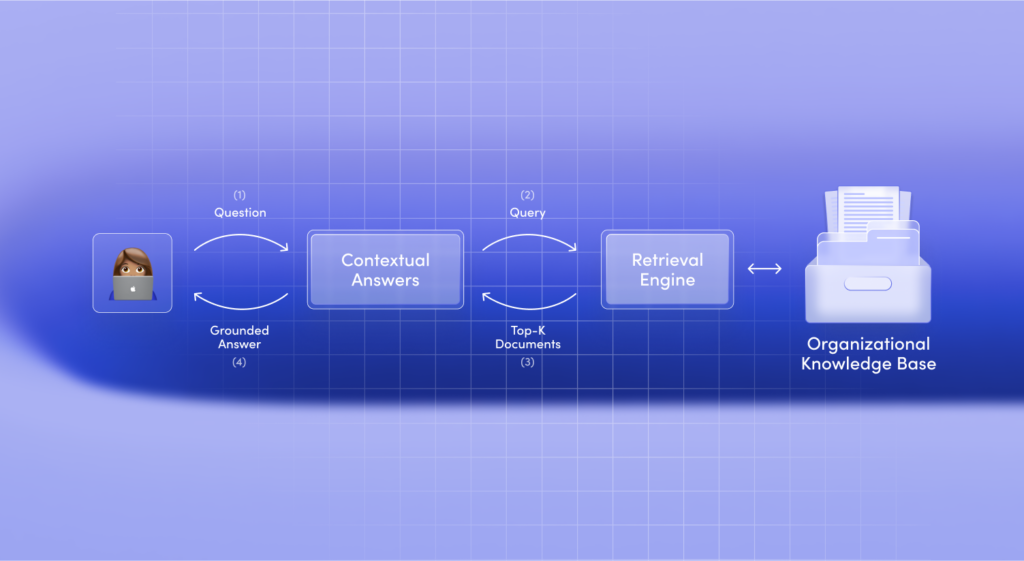

Your users can now ask a question and immediately get the answer with attribution to the relevant source. The system works as follows:

The question is used as a query for a retrieval mechanism, which searches over the entire knowledge base and retrieves the most relevant contexts.
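To make the retrieval step concrete, here is a toy sketch in plain Python. It ranks passages by how many words they share with the question and returns the best matches. This is only an illustration: the `tokenize` and `retrieve` helpers and the sample passages are hypothetical, and the retrieval mechanism behind Contextual Answers is not specified here and may work quite differently from simple word overlap.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, passages: dict[str, str], top_k: int = 2) -> list[str]:
    """Return the names of up to top_k passages sharing the most words with the question."""
    question_words = tokenize(question)
    scores = {
        name: len(question_words & tokenize(text))
        for name, text in passages.items()
    }
    # Drop passages with no overlap, then sort by score (highest first).
    ranked = sorted(
        (name for name, score in scores.items() if score > 0),
        key=lambda name: scores[name],
        reverse=True,
    )
    return ranked[:top_k]


# Hypothetical sample passages standing in for uploaded policy documents.
passages = {
    "hybrid_work": "Employees can work from home two days a week.",
    "it_security": "Passwords must be rotated every ninety days.",
    "work_abroad": "Working from abroad requires manager approval.",
}

print(retrieve("How many days can I work from home?", passages))
```

The top-ranked passage would then be passed to the model as context for generating the answer. A question with no overlapping words returns an empty list, which corresponds to the case where nothing relevant is found.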

With rapid changes occurring in work environments lately, a common question from employees is about working remotely:

response = ai21.Library.Answer.execute(question="How many days can I work from home?")

The response will be:

Two days a week

Note that the full response returned from the model also contains the sources used as context.
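As a rough illustration of how the sources might be surfaced to a user, the sketch below formats an answer together with its attributions. The response shape is an assumption made for this example: the `answer` and `sources` keys, and the `name` field on each source, are hypothetical stand-ins, so inspect the actual response object returned by the SDK before relying on them.

```python
def format_with_sources(response: dict) -> str:
    """Render an answer followed by the names of the documents it was drawn from."""
    # Assumed keys: "answer" (str) and "sources" (list of dicts with a "name").
    answer = response.get("answer") or ""
    names = [source.get("name", "unknown") for source in response.get("sources", [])]
    if not names:
        return answer
    return f"{answer} (sources: {', '.join(names)})"


# Hypothetical response, shaped like the example in the text.
sample = {
    "answer": "Two days a week",
    "sources": [{"name": "hybrid_work_guidelines.pdf"}],
}
print(format_with_sources(sample))
```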

However, if the answer to the question is not in any of the documents, the model will indicate that by returning an empty response. For instance, if we ask the following question:

response = ai21.Library.Answer.execute(question="What's my meal allowance when working from home?")

The response will indicate that the answer is not found in any of the documents within your Library.
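In an application, an empty response can be turned into a clear fallback message instead of a blank reply. A minimal sketch, assuming the answer text has already been extracted from the response as a string or `None`; the `answer_or_fallback` helper and its default message are hypothetical:

```python
from typing import Optional


def answer_or_fallback(
    answer: Optional[str],
    fallback: str = "Sorry, I could not find an answer in the company documents.",
) -> str:
    """Return the answer text, or a fallback message if the answer is missing or blank."""
    if answer is None or not answer.strip():
        return fallback
    return answer.strip()


print(answer_or_fallback("Two days a week"))
print(answer_or_fallback(None))
```

Keeping the fallback explicit makes it obvious to the user that the question was understood but is not covered by the uploaded documents.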

You can also ask questions via our Studio platform.

If you have a large collection of documents or have your own retrieval mechanism, you may want to ask a question on a subset of your knowledge base.